Happy New Year, all! I figured I would start off the year with a procedure I've used successfully to migrate View pools from one cluster to another, where each cluster has completely separate storage. When you start searching on this, one official answer from VMware is to perform a rebalance:

Migrating Linked clone pools to a different or new Datastore (1028754)

But what does a rebalance really do again?

The best quick definition comes from the pubs:

A desktop rebalance operation evenly redistributes linked-clone desktops among available datastores. If possible, schedule rebalance operations during off-peak hours.

That helps if we add new datastores to an existing cluster, but it doesn't really help if we need to move our stateless View environment onto new servers and storage altogether. I used this procedure with a customer recently and it worked great. I'm sure there are many minor variations on how to do this, but this method uses only native operations in the View Administrator interface; no PowerCLI scripting is required.

Here are a few important notes to keep in mind:

- This method assumes that the linked clone desktops are completely stateless and can be refreshed without impacting end user functionality or data.

- The new cluster must be managed by the same vCenter Server as the original pool, because moving View-managed desktops between vCenter Servers is not supported (1018045).

- During my testing on View 5.1.1 build 744999, I found that if you simply change the pool configuration to select the new cluster and storage and then perform a rebalance, linked clone desktops can start moving over before a replica has been created on the new cluster. The result was some VMs that powered on but had no OS. This may have been a fluke during testing, but seeing it once was enough to leave a bad taste in my mouth about that method.

- If the destination cluster runs even a slightly different ESXi build than the source cluster, you may need to update the gold image, both to update VMware Tools and to make sure users don't receive unexpected new hardware messages, particularly with Windows XP. The best way I found to do this is to clone the existing gold image over to the new cluster and start a new gold image for the pool. Remember that cloning a VM does not carry its snapshots over to the new copy, so be prepared to lose your snapshot tree if you go this route.

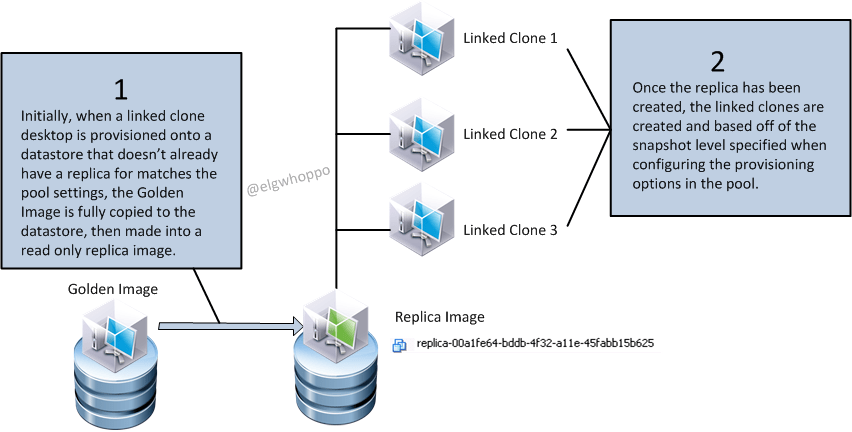

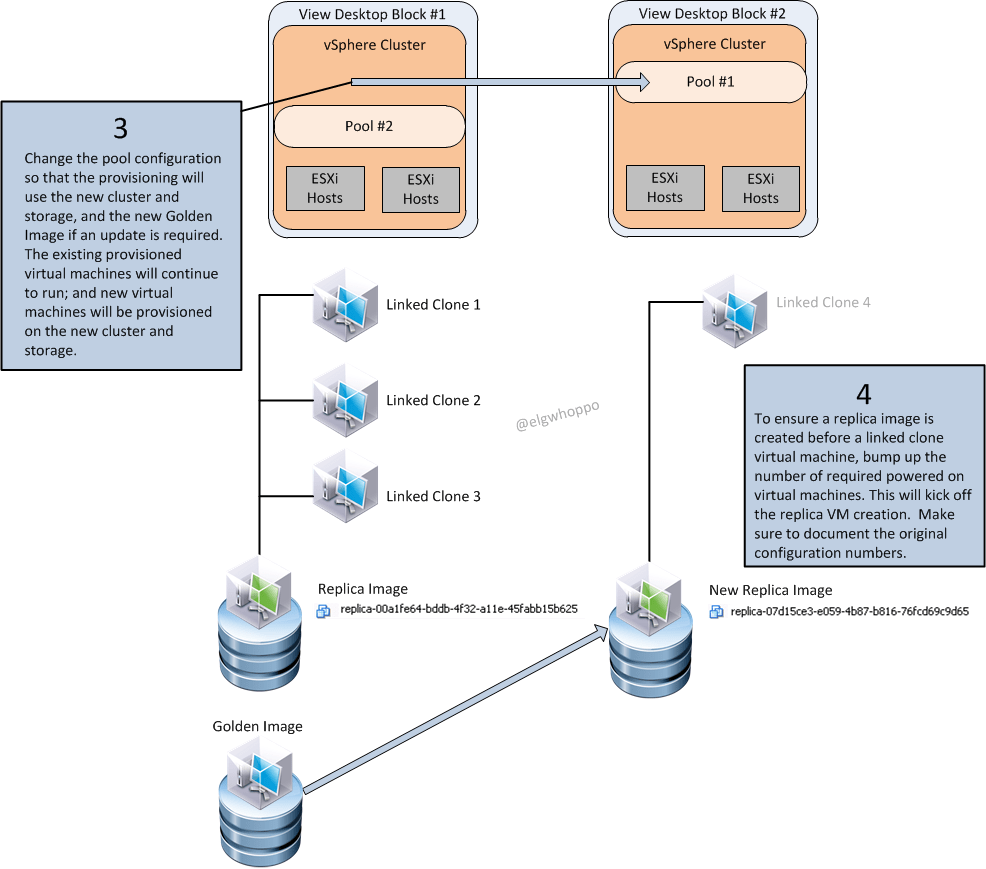

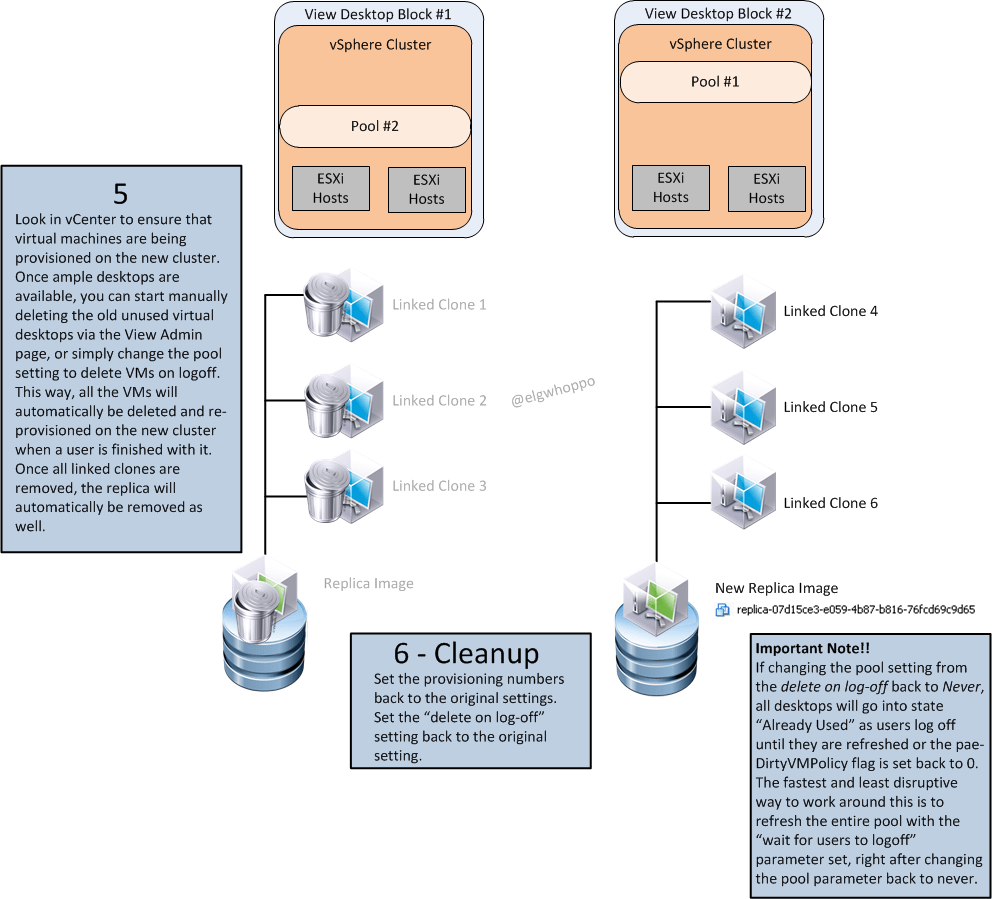

With those points in mind, here is a "visio'd whiteboard session" of the steps that worked for me. It's nothing advanced; it's just nice to see it laid out in a manner that makes sense.

Before starting step 3, you should have confirmed whether your gold image will require an update once it is located on the new cluster. You should also document the pool provisioning settings before you begin so that you can set them back to their original values when finished. The point of step 4 is that the pool must provision at least one new VM, so either raise the pool's provisioning numbers or delete existing provisioned VMs to make that happen.
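To make the step 4 arithmetic concrete, here is a minimal Python sketch; nothing in View runs this, it just captures the bookkeeping. It assumes the pool provisions all desktops up front, so View only builds a new desktop when the pool's maximum exceeds the number of desktops that already exist. The names `plan_step_four` and `ProvisioningChange` are hypothetical, not part of View or PowerCLI. The sketch records the original maximum (so you can set it back afterwards) alongside the temporary value to enter.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProvisioningChange:
    """Hypothetical record of the pool setting touched in step 4."""

    original_max: int   # documented value to restore when the migration is done
    temporary_max: int  # value to enter so that at least one new desktop is built


def plan_step_four(current_desktops: int, original_max: int) -> ProvisioningChange:
    """Pick a maximum pool size that forces at least one new desktop.

    Assumes up-front provisioning: the pool builds desktops until it
    reaches its maximum, so the maximum must exceed the current count.
    """
    if current_desktops < 0 or original_max < 0:
        raise ValueError("desktop counts cannot be negative")
    # If the pool is already below its maximum, no change is needed.
    temporary_max = max(original_max, current_desktops + 1)
    return ProvisioningChange(original_max=original_max, temporary_max=temporary_max)


if __name__ == "__main__":
    # A fully provisioned 20-desktop pool needs its maximum raised to 21.
    print(plan_step_four(current_desktops=20, original_max=20))
    # prints ProvisioningChange(original_max=20, temporary_max=21)
```

If you would rather not raise the maximum, deleting one existing desktop has the same effect under this assumption, because the pool then sits below its original maximum and View builds a replacement.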

Hope this helps, fellow View administrators! All feedback and constructive criticism are welcome here at elgwhoppo.com, as I only care about having the correct information. See you all around and about, and have a happy 2014!