There are plenty of blog posts on how to set up the cool new Virtual SAN, but not many on how to destroy the Virtual SAN object. Probably because it’s really simple. Never fear! In a shameless effort to drive hits from Google, here is your step-by-step guide to deleting the vsanDatastore.

Deleting the vsanDatastore means that all the data on the underlying disk groups will be nuked. Gone. Vamoosed. This means you must first migrate the VMs, either to separate available local storage or to another storage solution. You can delete a disk group from one of the nodes and then reformat its disks into VMFS volumes, but you will obviously lose fault tolerance when you move to that. But hey, you’re the one deleting the Virtual SAN object, not me. You should know why you’re doing it and what you’re moving to. I had to do this because I needed to nuke and refresh my cluster on 5.5 U1 from the beta refresh.

1. Migrate all the VMs and templates elsewhere. Where that is, well, that is your problem. You can verify whether any objects remain by examining the objects related to the datastore.

2. Turn off HA on the Virtual SAN enabled cluster. HA has to be off before you can turn off the Virtual SAN feature.

3. Delete all the disk groups individually. We will get a warning that we should put the host in maintenance mode first if we’re performing maintenance, but since we’re nuking the sucker we don’t want to do that.

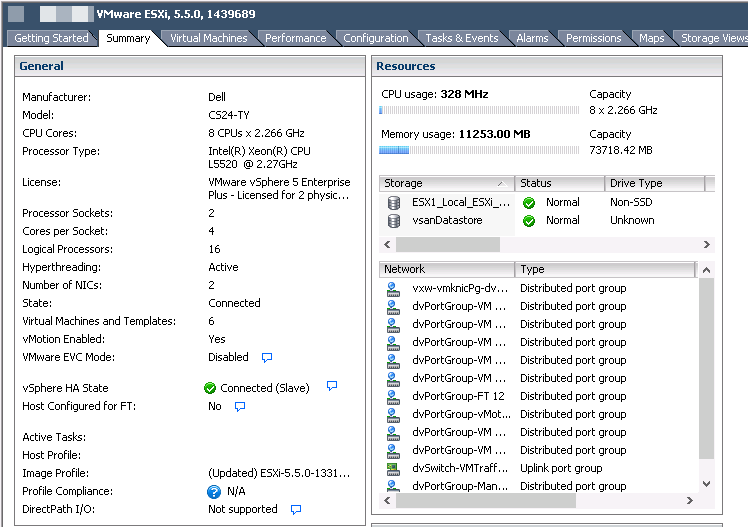

As we delete the disk groups, we can see the local disks become available in the Add Storage wizard for each host.

4. Repeat the process until all the disk groups have been deleted.

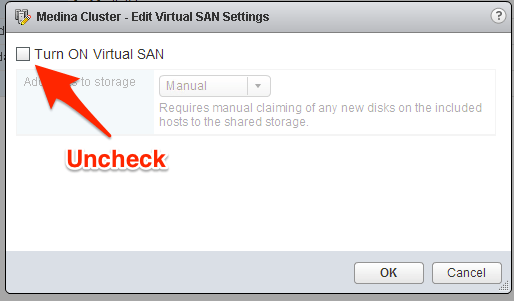

5. We are now ready to turn off the Virtual SAN feature on the cluster object.

6. Click OK on the warning.

7. Re-enable HA on the cluster. All done!
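
If it helps to see the ordering in one place, here is a rough Python sketch of the teardown sequence described above. To be clear, this is a toy model and not the vSphere API or PowerCLI: `Cluster`, `teardown_vsan`, and the host and disk group names are all made up for illustration. It only captures the order of operations and the one hard precondition (nothing left on the datastore).

```python
from dataclasses import dataclass, field


@dataclass
class Cluster:
    """Toy stand-in for a Virtual SAN enabled cluster (hypothetical, not a vSphere object)."""
    ha_enabled: bool = True
    vsan_enabled: bool = True
    # VMs and templates still living on vsanDatastore
    vsan_objects: list = field(default_factory=list)
    # host name -> list of disk group names on that host
    disk_groups: dict = field(default_factory=dict)


def teardown_vsan(cluster):
    """Apply the teardown in the order used in this post and return a log of actions."""
    # Refuse to continue while anything is still stored on the datastore.
    if cluster.vsan_objects:
        raise RuntimeError(f"migrate these first: {cluster.vsan_objects}")

    log = []

    # HA has to be off before the Virtual SAN feature can be turned off.
    cluster.ha_enabled = False
    log.append("HA disabled")

    # Delete every disk group on every host, one at a time.
    for host, groups in cluster.disk_groups.items():
        while groups:
            log.append(f"deleted {groups.pop(0)} on {host}")

    # Only now turn off the Virtual SAN feature on the cluster.
    cluster.vsan_enabled = False
    log.append("Virtual SAN disabled")

    # Finally, turn HA back on.
    cluster.ha_enabled = True
    log.append("HA re-enabled")
    return log


# Example run with made-up host and disk group names.
cluster = Cluster(disk_groups={"esx01": ["dg-1"], "esx02": ["dg-2"]})
for line in teardown_vsan(cluster):
    print(line)
```

The guard at the top is the part worth copying into any real automation: bail out loudly if anything is still on the datastore, because there is no undo once the disk groups are gone.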